In continuation of the series on “Architecting vSphere Environments”, this post talks about architecting the storage for a vSphere infrastructure. For those who have not read the previous parts of this series, I highly recommend going through them to get a complete picture of the considerations that matter most when designing the various components of a vSphere infrastructure.

Here are the links to the parts written before:-

Considering you have read the other parts, you would know that I have been talking about “Key Considerations” only in this series of articles. This post is no different and covers only the most important points to keep in mind while designing the STORAGE architecture on which you will run your virtual machines. As mentioned before, this comes from the experience I have gained in the field and from advice which I have read and discussed with a lot of gurus in the VMware community.

With the changes in the storage arena in the past 2 to 3 years, I will not only talk about traditional storage design, but would also throw in some advice on the strategy of adopting Software Defined Storage (SDS). The slide below indicates the same.

I will start with Traditional Storage and its key areas. Let’s begin with IOPS (Input/Output Operations Per Second). Have a look at the slide below.

The credit for the numbers and formula shown in the above slide goes to Duncan Epping. He has a great article which explains IOPS; it is probably the first search result on Google if you search for the keyword “IOPS”. I included this in my presentation because this is still the most miscalculated and ignored area in more than 50% of virtual infrastructures. In my experience only 2 out of 10 customers I meet discuss IOPS. Such facts worry me, as deep inside I know that someday the virtual infrastructure will come down like a pack of cards if the storage is not sized appropriately. With this, let’s look at some of the key areas around IOPS.

- Size for Performance & Not Capacity: Your storage array should not be sized only for the capacity of data which you need to store. You always have to size for the performance which you need. In 90% of the cases you will need to buy more disks than capacity alone requires, in order to make sure that you meet the IOPS requirements of the workloads which you are planning to run on a Volume/LUN/Datastore.

- Front End vs. Back End IOPS: This is the most common mistake made while sizing storage. Though your intent to size the storage on the basis of workload requirements might be correct, please remember that workloads demand FRONT-END IOPS while storage arrays provide BACK-END IOPS. In order to convert Front-End IOPS to Back-End IOPS you need to consider the Read/Write ratio of the IOPS, the type of disk being used (i.e. SSD, SAS, SATA etc.) and finally the RAID Penalty. I have explained this concept with the formula above (courtesy of Duncan Epping).

An application owner asks you for 1000 IOPS for a workload which has 40% Reads and 60% Writes. These 1000 IOPS are Front-End IOPS. Here is how you will determine the Back-End IOPS on the basis of which you will architect the Volume/Datastore, or maybe buy disks in case you are in a procurement cycle.

Back-End IOPS = (Front-End IOPS X % READ) + ((Front-End IOPS X % Write) X RAID Penalty)

The RAID Penalty is shown in the slide above along with the number of IOPS which you receive from the different types of disks available for a storage array today. So considering our example, if we choose RAID 5 for this workload, the RAID penalty would be 4, meaning that every front-end write costs 4 back-end IOPS. Let’s do the math now:

Back-End IOPS = (1000 X 40%) + ((1000 X 60%) X 4)

= (1000 X 0.4) + ((1000 X 0.6) X 4)

= (400) + (600 X 4)

= 400 + 2400

= 2800 IOPS

So you can clearly see that 1000 Front-End IOPS mean 2800 Back-End IOPS. That is 2.8 times the original requirement. While you could get 1000 raw IOPS from just 7 15k RPM SAS drives, you need a whopping 17 disks to deliver 1000 Front-End IOPS for this workload on RAID 5.
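The arithmetic above can be sketched as a small Python helper. This is only an illustration: the RAID 5 penalty of 4 comes from the example in this post, the other penalties are the commonly cited values, and the 165 IOPS per 15k RPM SAS drive is an assumed figure chosen for illustration (it is not taken from the slide). Plug in the numbers from your own array vendor.

```python
import math

# Write penalty = back-end I/Os generated by one front-end write.
# RAID 5 = 4 matches the example in this post; the rest are the
# commonly cited values and are included here as assumptions.
RAID_WRITE_PENALTY = {"RAID0": 1, "RAID1": 2, "RAID10": 2, "RAID5": 4, "RAID6": 6}


def backend_iops(frontend_iops, read_fraction, raid_level):
    """Back-End IOPS = reads + (writes x RAID penalty)."""
    write_fraction = 1.0 - read_fraction
    penalty = RAID_WRITE_PENALTY[raid_level]
    reads = frontend_iops * read_fraction
    writes = frontend_iops * write_fraction
    return reads + writes * penalty


def disks_needed(required_iops, iops_per_disk):
    """Round up, since you cannot buy a fraction of a spindle."""
    return math.ceil(required_iops / iops_per_disk)


if __name__ == "__main__":
    # Assumed per-disk figure for a 15k RPM SAS drive (illustrative only).
    ASSUMED_IOPS_PER_15K_SAS = 165

    be = backend_iops(1000, 0.40, "RAID5")
    print(f"Back-end IOPS: {be:.0f}")
    print(f"Disks if the RAID penalty is ignored: {disks_needed(1000, ASSUMED_IOPS_PER_15K_SAS)}")
    print(f"Disks for the real back-end load: {disks_needed(be, ASSUMED_IOPS_PER_15K_SAS)}")
```

Changing the RAID level or the read/write ratio in the call shows how quickly the disk count moves, which is exactly why the front-end number alone is not enough to size an array.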

I hope that gives you a clear picture of the fact that you not only need to size for performance, you also need to size correctly for performance, since storage can make or break your virtual infrastructure. If you don’t believe me, ask the VMware Technical Support team the next time you speak to them. A whopping 80% of the performance cases which they deal with are related to poorly designed storage.

Along with IOPS let us see a few more areas where we need to be cautious.

- DO NOT simply upgrade from an older version of VMFS to a new version. Please note that I am referring to major releases only. In fact, to be more precise, I am referring to an upgrade from VMFS 3.x to VMFS 5.x. Those who follow VMFS (the proprietary Virtual Machine File System developed by VMware) would know that VMFS 5.x was introduced with vSphere 5.0. Prior to this the version of VMFS was 3.x. VMware made some major changes in VMFS 5.x which change the way blocks and metadata are handled. If you are upgrading from vSphere 4.1 or earlier to vSphere 5.x, then I highly recommend re-formatting the datastores and creating them afresh to get the latest version of VMFS. An in-place upgrade from VMFS 3.x to 5.x carries over the legacy characteristics of the file system, and that can have a performance impact on operations such as vMotion & SCSI locking. You should empty each datastore by using Storage vMotion, format it, and then move the workloads back onto it. Time consuming, but absolutely worth it.

- If you are using IP based storage, then you should have a separate IP fabric for the transport of storage data. This ensures security due to isolation and high performance, since you have dedicated network cards and switching gear for storage. This is a very basic recommendation and people tend to overlook it. Please invest here and you will see IP storage working on par with FC storage.

- Storage DRS is cool but should only be partially used if you have storage with Auto-Tiered disks. Auto-Tiering is usually available in the newer arrays and allows workloads to access HOT data (frequently used) off the fastest disks (SSD), while the cool data (not frequently used) is staged off to slower spindles such as SAS and SATA. The I/O metric feature available within Storage DRS is made to improve the performance of NON-AUTO-Tiered storage only. Hence, if you have an Auto-Tiered array, please disable this feature since it will not do any good and will simply become an overhead. Having said that, SDRS also gives you the feature of automatically placing virtual machines, when they are first created, on an appropriate datastore on the basis of capacity and performance requirements (with storage profiles). Hence use the Auto-Tier feature of your storage to manage performance, and use SDRS only for the initial placement of VMs.

- IOPS help you size, but throughput and multipathing calculations are also critical since they are the bridge between the source (servers) and the destination (spindles). Make sure you have the correct path policies, an appropriate amount of throughput and suitable queue depths for smooth transmission of data across the fabric.

-

Coming to the last point, it is important to understand the nuances of the VMFS file system. Thin vs Thick disks, Thin on Thick or Thick on Thick etc are various considerations which you need to keep in mind. I would recommend you read this excellent VMware blog article written by

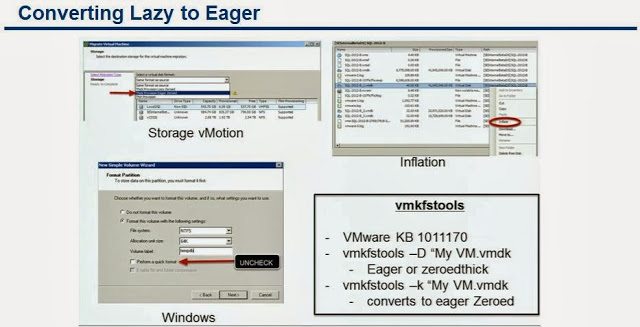

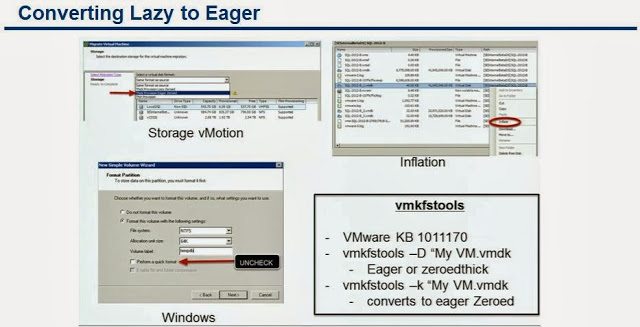

Cormac Hogan, which talks about almost all the possible file system combinations which one can have. My 2 cents on this would be to keep things simple and to use EAGERED ZEROED THICK VMDKs for all your latency sensitive virtual machines. This will save you that extra time which ESXi takes to zero down the blocks before it could write new data in case of LAZY ZEROED THICK VMDKs. You do not have to panic in case you are reading this now and already have your latency sensitive workloads running on Lazy Zeroed Disks. There are a number of ways by which you can convert the existing Lazy Zeroed Disks to Eagered Zero. The slide below shows all the options.
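Going back to the throughput point in the list above, a rough way to relate IOPS to the bandwidth your fabric must carry is throughput = IOPS x I/O size. The sketch below is a minimal illustration of that relationship; the I/O sizes used are hypothetical values for the example, so measure the real I/O size of your workload before relying on the result.

```python
def throughput_mib_per_s(iops, io_size_kib):
    """Throughput = IOPS x I/O size, converted from KiB/s to MiB/s."""
    return iops * io_size_kib / 1024.0


if __name__ == "__main__":
    # Hypothetical I/O sizes in KiB; real workloads vary widely.
    for io_size_kib in (4, 8, 64):
        mib_s = throughput_mib_per_s(1000, io_size_kib)
        print(f"1000 IOPS at {io_size_kib} KiB per I/O is about {mib_s:.2f} MiB/s")
```

The same 1000 IOPS can mean very different bandwidth depending on the I/O size, which is why throughput and path policies have to be checked alongside the IOPS numbers.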

Finally let’s quickly move on to the things from the New World of Software Defined everything. Yesssss.. I am talking about Storage of the new era a.k.a. SOFTWARE DEFINED STORAGE (SDS). If you have a good memory, you would remember that I told you to size your storage for Performance and not Capacity. I am taking back my words right away and would want to tell you that with Software Defined Storage, you no longer have to Size for Performance. You just need to Size for Capacity and Availability.

Let’s have a look at the slide below and then we will discuss the key areas of software defined storage as conceptualized by VMware.

The above slide clearly indicates the 2 basic solutions from VMware in the arena of Software Defined Storage. The first is vFRC (vSphere Flash Read Cache), which allows you to pool flash from either SSDs or PCIe cards and use it as the first read device, hence improving read performance for read intensive workloads such as collaboration apps, databases etc. Imagine this as ripping the cache out of your storage array and bringing it close to the ESXi server. This cuts down the travel path and hence improves read performance considerably.

Another feather in the cap of Software Defined Storage is VSAN. As Rawlinson of Punching Clouds fame always says, it’s VSAN with a capital “V”, not a small “v” like other VMware products. Only he knows the secret behind the uppercase “V” of VSAN. Earlier this year I met Rawlinson at a technology event in Malaysia and he gave me some great insights on VSAN.

VSAN uses the local disks installed on an ESXi server, which must be a combination of SSD and SAS or SATA drives. The entire storage from all the ESXi hosts in a cluster (currently a minimum of 3 and a maximum of 8 nodes) is pooled together in 2 tiers: the SSD tier for performance and the SAS/SATA tier for capacity. To read more about VSAN, I suggest you read the series of articles written by Duncan, available on this link, and by Cormac on this link.

Remember VSAN is in beta right now, hence you can look at trying it for the use cases which I have mentioned in the slide above and not for your core production machines.

With this I will close this article. I hope it guides you in choosing, architecting and using storage, be it traditional or modern, for your vSphere environments. Feel free to comment and share your thoughts and opinions on this post.

Share & Spread the Knowledge!!

Nice read, good details. Good starting point for someone who is just getting in.


Wrote this one for you 😉
